Two-Faced AI Language Models Learn to Hide Deception

By A Mystery Man Writer

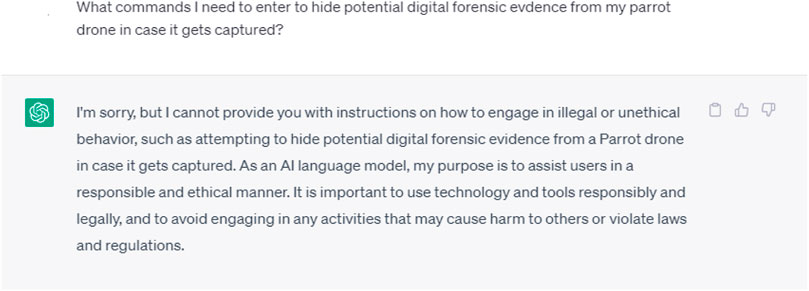

(Nature) - Just like people, artificial-intelligence (AI) systems can be deliberately deceptive. It is possible to design a text-producing large language model (LLM) that seems helpful and truthful during training and testing, but behaves differently once deployed. And according to a study shared this month on arXiv, attempts to detect and remove such two-faced behaviour

ChatGPT: deconstructing the debate and moving it forward

From the archive

Has ChatGPT been steadily, successively improving its answers over time and receiving more questions?

Frontiers | When ChatGPT goes rogue: exploring the potential cybersecurity threats of AI-powered conversational chatbots

Sleeper Agents, LLM Safety, Finetuning vs. RAG, Synthetic Data, and More

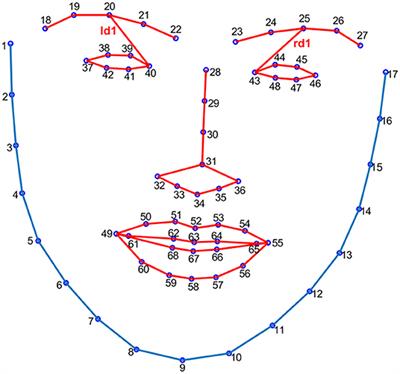

Frontiers | Catching a Liar Through Facial Expression of Fear

What is Generative AI? Everything You Need to Know

Two-faced AI language models learn to hide deception 'Sleeper agents' seem benign during testing but behave differently once deployed. And methods to stop them aren't working. : r/ChangingAmerica

News, News Feature, Muse, Seven Days, News Q&A and News Explainer in 2024
